Googlescraper

Search Engine Scraper

With regular search listings, Google usually showed enough information for a searcher to decide whether to visit a website and, if so, they would click through. But the changes over the past few years (which Bing has also made) have been to provide actual answers drawn from sites, so that there is no need to click through. Perhaps it's SEO's "Oreo moment": a tweet about search engine optimization that gained nearly as much attention as Oreo's well-known Super Bowl blackout tweet. The topic was a perfect storm, a real-life example of Google doing the kind of thing in search that it seems to tell others not to do. Some scraper sites are created to make money through advertising programs.

Search Engine Harvester

Your proxy provider will likely get upset if you get too many of their proxies blacklisted, so it's best to stop scraping with a proxy IP before this happens. Banned means you won't be able to use that IP on Google; you'll just get an error message.
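
One way to stop using a proxy before it gets banned is to count warning signs per proxy and retire it early. The sketch below is only an illustration under stated assumptions: the `ProxyPool` class, the `max_captchas` threshold, and the sample addresses are all hypothetical, and the right threshold depends on your provider and target.

```python
from dataclasses import dataclass, field


@dataclass
class ProxyPool:
    """Tracks CAPTCHA hits per proxy and retires a proxy before it is banned."""

    proxies: list[str]
    max_captchas: int = 3  # hypothetical threshold; tune it for your provider
    captcha_hits: dict[str, int] = field(default_factory=dict)
    retired: set[str] = field(default_factory=set)

    def active(self) -> list[str]:
        """Return the proxies that are still considered safe to use."""
        return [p for p in self.proxies if p not in self.retired]

    def report_captcha(self, proxy: str) -> None:
        """Record a CAPTCHA and retire the proxy once it reaches the threshold."""
        self.captcha_hits[proxy] = self.captcha_hits.get(proxy, 0) + 1
        if self.captcha_hits[proxy] >= self.max_captchas:
            self.retired.add(proxy)


# Made-up proxy addresses, for illustration only.
pool = ProxyPool(["10.0.0.1:8080", "10.0.0.2:8080"], max_captchas=2)
pool.report_captcha("10.0.0.1:8080")
pool.report_captcha("10.0.0.1:8080")
print(pool.active())
```

Retiring on CAPTCHA count rather than on an outright error is a design choice here: by the time requests fail completely, the IP may already be flagged.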

Search Engine Harvester Tutorial

Scraper API is a tool that handles proxies, browsers, and CAPTCHAs so developers can get the HTML of any web page with a simple API call. Zenserp's documentation is also excellent, making it easy to get up and running quickly, and its API offers a full range of features for scraping all kinds of SERP data, including organic, paid, answer box, featured snippet, top story, and local map results. The one downside to Zenserp, as with so many others on this list, is price. At $380 for 100,000 API calls, it is not a solution for someone who needs to extract millions of search results per month.
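
To make the pricing point concrete, here is a small worked calculation. It uses only the $380 per 100,000 calls figure quoted above and assumes the price scales linearly with volume, which real pricing tiers may not do; the function name `monthly_cost` is made up for this sketch.

```python
PRICE_USD = 380           # price quoted in the text
CALLS_INCLUDED = 100_000  # API calls covered by that price


def monthly_cost(calls_per_month: int) -> float:
    """Estimate the monthly cost by scaling the quoted rate linearly (an assumption)."""
    return calls_per_month * PRICE_USD / CALLS_INCLUDED


print(monthly_cost(1_000_000))   # one million results per month
print(monthly_cost(10_000_000))  # ten million results per month
```

Under that linear assumption, each call costs $0.0038, so one million calls per month comes to $3,800.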

Search Engine Scraping

Google may ban any user who tries to scrape its search results automatically. The Suggest Scraper can generate thousands of relevant organic search terms to be scraped. Scraping search engines has become a serious business in recent years, and it remains a very challenging task. This advanced PHP source code is developed to power scraping-based projects. You don't need to be an XPath genius, because Data Miner has community-generated data extraction rules for common websites.

Scrape impressions on ads usually don't add up to much, but the search engine may be opening the floodgates to compete. Yahoo! is easier to scrape than Google, but still not very easy. And because it is used less often than Google and other engines, applications don't always have the best system for scraping it.

Methods Of Scraping Google, Bing Or Yahoo

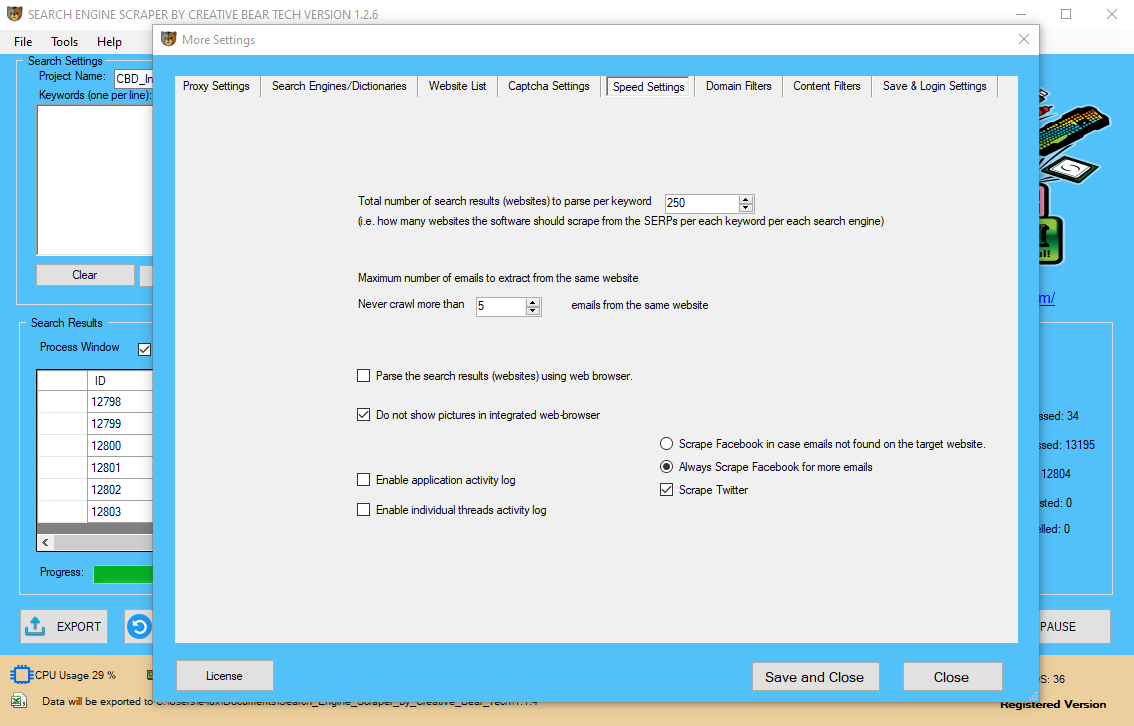

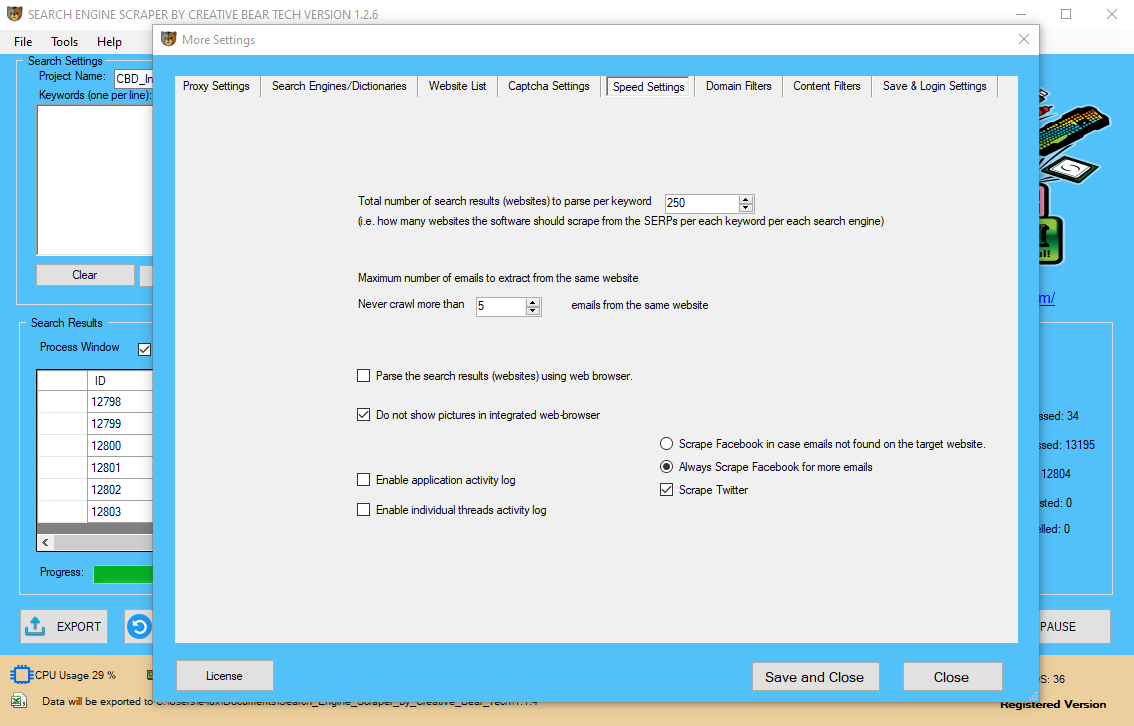

No concurrency means only one browser or tab is searching at a time. Websites often block an IP address after a certain number of requests from it. So the maximum concurrency equals the number of proxies plus one (your own IP).
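
The "proxies plus one" rule can be sketched as one worker per route, where each route handles its own share of keywords one at a time. This is a minimal illustration, not how any particular scraper implements it: `scrape_share` is a placeholder that performs no network requests, and the proxy address is made up.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Optional


def max_concurrency(proxies: list[str]) -> int:
    """One worker per proxy, plus one for your own IP."""
    return len(proxies) + 1


def scrape_share(keywords: list[str], proxy: Optional[str]) -> list[str]:
    """Placeholder scrape: processes one route's keywords sequentially."""
    route = proxy if proxy is not None else "own IP"
    return [f"{keyword} via {route}" for keyword in keywords]


def run(keywords: list[str], proxies: list[str]) -> list[str]:
    routes: list[Optional[str]] = [None, *proxies]  # None stands for your own IP
    workers = max_concurrency(proxies)
    # Split the keywords so that each route gets its own share.
    shares = [keywords[i::workers] for i in range(workers)]
    with ThreadPoolExecutor(max_workers=workers) as executor:
        batches = executor.map(scrape_share, shares, routes)
        return [line for batch in batches for line in batch]


print(run(["a", "b", "c", "d"], ["proxy1:8080"]))
```

Giving each route a fixed share, rather than handing tasks to whichever worker is free, keeps any single IP from sending two requests at once.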

Google can't stop the process; people scrape it every hour of the day. But it can put up stringent defenses that stop people from scraping excessively. Being top dog means Google has the biggest reputation to defend, and in general it doesn't want scrapers sniffing around. I won't get into all the search engines out there; that's too many.

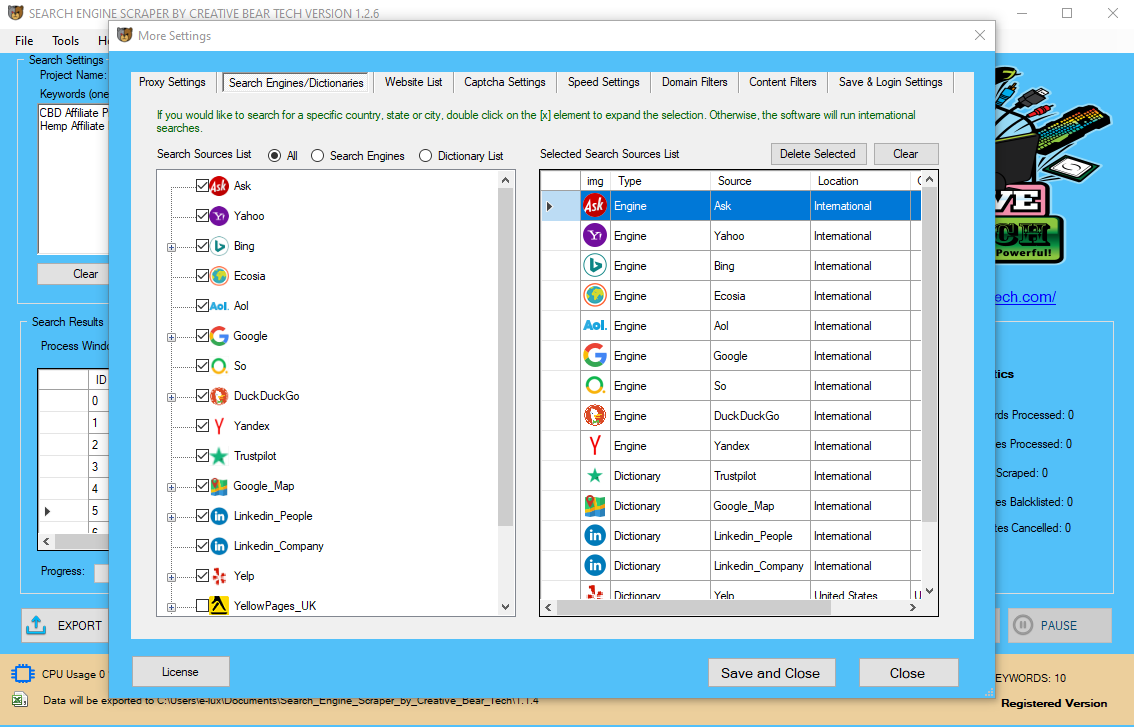

- The limitation of the domain filters mentioned above is that not every website will necessarily contain your keywords.

- For example, there are many brands that do not necessarily contain the keywords in the domain.

- Additionally, you can get the software to check the body text and HTML code for your keywords as well.

- The software will not save data for websites that do not have emails.
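
The filtering rules in the list above can be expressed as a single predicate. This is a rough sketch of the logic as described, not the tool's actual code: the `Site` record, the `keep_site` function, and the sample domains are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Site:
    domain: str
    html: str
    emails: list[str]


def keep_site(site: Site, keywords: list[str], check_body: bool = True) -> bool:
    """Keep a site only if it has emails and matches at least one keyword."""
    if not site.emails:
        return False  # sites without emails are not saved
    lowered = [keyword.lower() for keyword in keywords]
    if any(keyword in site.domain.lower() for keyword in lowered):
        return True  # the domain filter matched
    # Optional second pass for brands whose domain lacks the keyword.
    return check_body and any(keyword in site.html.lower() for keyword in lowered)


brand = Site("glowco.example", "<p>Organic cosmetics and skincare</p>", ["hello@glowco.example"])
print(keep_site(brand, ["cosmetics"]))                    # body check enabled
print(keep_site(brand, ["cosmetics"], check_body=False))  # domain filter only
```

With the body check disabled, the brand site is dropped because "cosmetics" does not appear in its domain, which is exactly the limitation the list describes.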

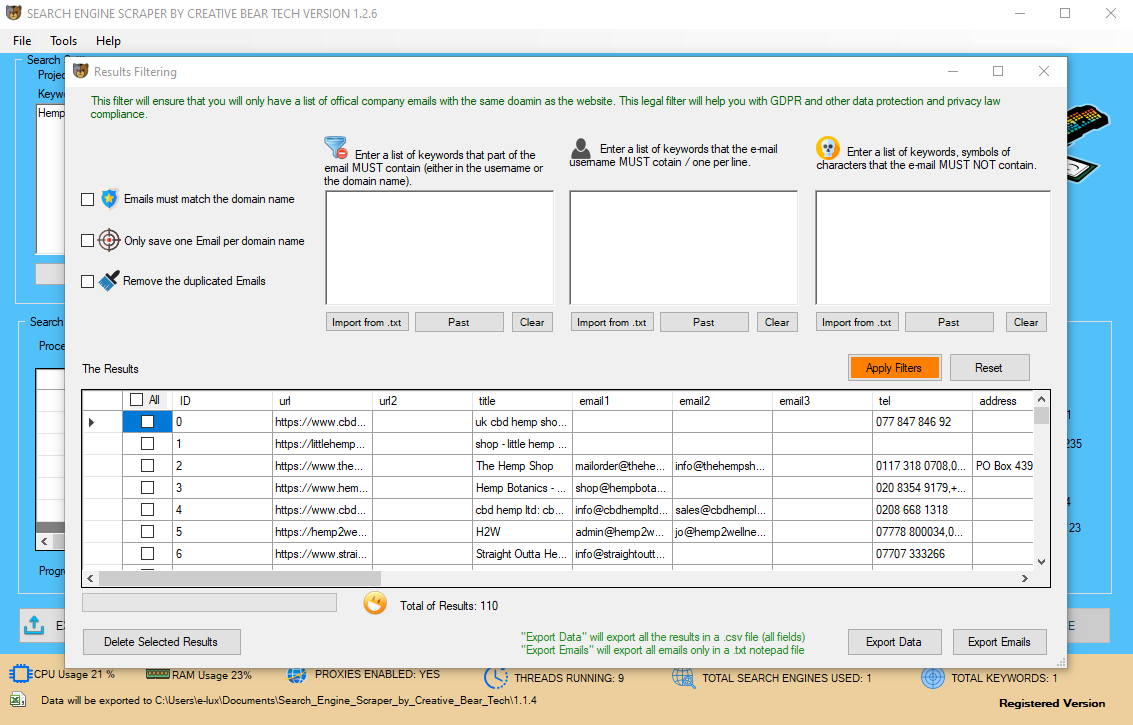

In that case, they are called Made for AdSense (MFA) sites. This derogatory term refers to websites that have no redeeming value except to lure visitors to the site for the sole purpose of clicking on ads.

He is always experimenting with new formats and looking for creative ways to produce, optimize and promote content. He previously wrote for CanadaOne Magazine and helped create and implement online marketing strategies at Mongrel Media.

Read more on how to use the Twitter List Scraper in this blog post. Twitter lists are user-generated groups of individual users on Twitter, usually based on a common interest or theme. With the Twitter List Scraper, simply paste in the URLs of the member pages, and the tool will return the Twitter usernames and profile links of all the members.

In the coming weeks, I will take some time to update all functionality to the latest developments. This includes updating all regexes and adapting to changes in search engine behavior. After a few weeks, you can expect this project to work again as documented here.

Scrapy: an open source Python framework, not dedicated to search engine scraping but often used as a base, with a large number of users.

This topic is a big one, and one I won't get into in depth in this article. However, it's important to realize that after you download the software and upload the proxies, you'll need to adjust the parameters of the scrape. If you want to do advanced scraping, it helps to know the basics of XPath, CSS and jQuery selectors, regular expressions (regex), and debugging with the Chrome inspector or WebStorm. I also recommend tailoring scraping settings (like retry rates) when you start to see captchas, to maximize your yield of data.

It's important to avoid getting proxies blacklisted as much as possible. Blacklisted means the IP itself goes on a big list of "no's". If you continue a new scrape with an IP that Google has flagged, it will likely get banned from Google and then blacklisted. When Google does detect a bot, it will throw up captchas at first. These are those annoying guessing games that try to tell whether you're human. They will most often stump your proxy IP and software, thereby stopping your scrape.

Enter your Google search phrase below to get the first 500 results as a CSV file that you can then use with Excel or any other application that can handle comma-separated values.

One option to reduce dependency on a single company is to use two approaches at the same time: use the scraping service as the primary source of data and fall back to a proxy-based solution, as described in 2), when required. Recently a customer of mine had a huge search engine scraping requirement, but it was not ongoing; it was more like one big refresh per month.

se-scraper should be able to run without any concurrency at all.

Add a public proxy scraper tool that auto-checks and verifies the public proxies, automatically removes non-working proxies, and scrapes new proxies every X minutes.

"Enter a list of keywords that the email username must contain": here our aim is to increase the relevancy of our emails and reduce spam at the same time. For example, I may want to contact all emails starting with info, hello, sayhi, and so on.

"Google blocked us, we need more proxies!" Make sure you did not damage the IP management capabilities. Consider changing keywords and lowering request rates. If you have not accepted the search engine's terms of service, you should not face legal threats for passively scraping it.
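
The two-approach idea above, a scraping service as the primary source with a proxy-based fallback, can be sketched as a small wrapper. Everything here is hypothetical: `fetch_with_fallback`, `broken_service`, and `proxy_scraper` are stand-ins that make no network requests, and a real fallback would need its own error handling.

```python
from typing import Callable

Fetcher = Callable[[str], str]


def fetch_with_fallback(query: str, primary: Fetcher, fallback: Fetcher) -> str:
    """Try the scraping service first; use the proxy-based scraper if it fails."""
    try:
        return primary(query)
    except Exception as error:  # broad on purpose: any service failure triggers the fallback
        print(f"primary failed ({error}); falling back")
        return fallback(query)


def broken_service(query: str) -> str:
    """Stand-in for a scraping service that is currently down."""
    raise ConnectionError("service unavailable")


def proxy_scraper(query: str) -> str:
    """Stand-in for a proxy-based scraper."""
    return f"<html>results for {query}</html>"


print(fetch_with_fallback("search engine scraping", broken_service, proxy_scraper))
```

Passing both fetchers in as arguments keeps the wrapper independent of any one vendor, which is the point of the approach.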

The guide How To Scrape Google With Python goes into more detail on the code if you're interested. Another option for scraping Google search results with Python is the one by Zenserp. Today, I ran into another Ruby discussion about how to scrape Google search results. This provides a great alternative for my problem that will save all the effort on the crawling part.

You can try Scraper API's very generous free trial with 5,000 free requests here, and if you need to scrape more than 3,000,000 pages per month, contact our sales team with this form.

Whereas the former approach was implemented first, the latter approach looks much more promising in comparison, because search engines have no easy way of detecting it. The results (partial results, because there were too many keywords for one IP address) can be inspected in the file Outputs/advertising.json. Update the following settings in the GoogleScraper configuration file scrape_config.py to your values. This project is live again after two years of abandonment.

It ensures optimal performance for scraping, plus an optimal experience for you and for your provider. Trial and error over the years has made this a consistent fact for me. It's not entirely clear why this is the case, and we may never know. One idea is that Bing doesn't want to block any visitors because it reduces overall page views, which means fewer ad impressions overall.

Even bash scripting can be used together with cURL as a command-line tool to scrape a search engine. The second layer of defense is a similar error page but without a captcha; in that case the user is completely blocked from using the search engine until the temporary block is lifted or the user changes IP. HTML markup changes: depending on the methods used to harvest a website's content, even a small change in the HTML can render a scraping tool broken until it is updated.

You can add country-based search engines, or even create a custom engine for a WordPress site with a search box to harvest all the post URLs from the site. Trainable harvester with over 30 search engines and the ability to easily add your own search engines to harvest from almost any site.
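
A scraper can react differently to the two defense layers if it first classifies each response. The sketch below assumes that the first layer is an error page containing a captcha and the second is a similar page without one, as the text implies. The status codes in `BLOCK_STATUS_CODES` and the "captcha" text marker are assumptions for illustration, not confirmed behavior of any search engine.

```python
BLOCK_STATUS_CODES = {429, 503}  # assumed codes for a block page; verify against real responses


def classify_response(status_code: int, body: str) -> str:
    """Return 'ok', 'captcha', or 'blocked' for a search engine response."""
    if status_code not in BLOCK_STATUS_CODES:
        return "ok"
    if "captcha" in body.lower():
        return "captcha"  # first layer: a challenge that could still be solved
    return "blocked"      # second layer: wait for the block to lift or change IP


print(classify_response(200, "<html>results</html>"))
print(classify_response(429, "<html>Please solve this CAPTCHA</html>"))
print(classify_response(503, "<html>Unusual traffic detected</html>"))
```

Because HTML markup changes can break this kind of marker check, it is worth keeping the marker strings in one place so they are easy to update.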